Mixed Data Verification – Perupalalu, 5599904722, 9562871553, 8594696392, 6186227546

Mixed Data Verification for Perupalalu involves aligning heterogeneous data across content, structure, and semantics to produce trustworthy analytics. The process emphasizes modular, reproducible validation workflows, explicit provenance, and standardized metadata registries. It addresses schema misalignment and opaque provenance through schema contracts and incremental reconciliation, enabling scalable validators. Governance, documentation, and auditable rules support consistent decision criteria. The challenge lies in balancing flexibility with discipline, inviting a careful examination of how these elements cohere as data sources evolve.

What Mixed Data Verification Is and Why It Matters

Mixed data verification refers to the systematic process of confirming that data collected from heterogeneous sources align in content, structure, and semantics, while also identifying and reconciling discrepancies across datasets.

The practice supports data integration and reinforces data governance by documenting provenance, validating consistency, and guiding reconciliation strategies, enabling organizations to confidently combine diverse records and sustain trustworthy analytics, reporting, and strategic decision-making.

Designing a Robust Verification Framework for Perupalalu and Similar Datasets

Developing a robust verification framework for Perupalalu and similar datasets requires a structured, repeatable approach that anticipates heterogeneity in source data.

The framework emphasizes structured validation workflows, reproducible checks, and modular components that integrate provenance metadata.

Data provenance is captured at each stage, enabling traceability, auditability, and consistent decision criteria across sources, formats, and temporal updates.
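One way to picture a modular, provenance-aware validator is the minimal Python sketch below. It is an illustration only, not a prescribed implementation: the `Validator` and `ProvenanceEntry` names, the two checks, and the source labels `crm_export.csv` and `billing_api` are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

# A check takes one record and returns True when the record passes.
Check = Callable[[dict], bool]


@dataclass
class ProvenanceEntry:
    source: str       # where the record came from (hypothetical label)
    check: str        # name of the check that ran
    passed: bool      # outcome of that check
    checked_at: str   # ISO 8601 timestamp in UTC


@dataclass
class Validator:
    checks: dict[str, Check]
    log: list[ProvenanceEntry] = field(default_factory=list)

    def validate(self, record: dict, source: str) -> bool:
        """Run every check and append one provenance entry per check."""
        ok = True
        for name, check in self.checks.items():
            passed = bool(check(record))
            timestamp = datetime.now(timezone.utc).isoformat()
            self.log.append(ProvenanceEntry(source, name, passed, timestamp))
            ok = ok and passed
        return ok


# Hypothetical checks; real rules depend on the dataset.
validator = Validator(checks={
    "has_id": lambda r: "id" in r,
    "amount_non_negative": lambda r: isinstance(r.get("amount"), (int, float))
    and r["amount"] >= 0,
})

result_good = validator.validate({"id": 1, "amount": 10.5}, source="crm_export.csv")
result_bad = validator.validate({"amount": -3}, source="billing_api")
```

Because every check writes its own log entry, the log can later answer which rule rejected which record from which source, which is the traceability the framework calls for.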

Common Pitfalls in Heterogeneous Data Reconciliation and How to Avoid Them

Common pitfalls in heterogeneous data reconciliation arise from misaligned metadata, inconsistent schemas, and opaque provenance trails. To avoid them, practitioners implement explicit schema contracts, traceable lineage, and standardized metadata registries.

Inconsistent schemas hamper cross-dataset matching, causing brittle joins and hidden errors. Rigorous validation, incremental reconciliation, and clear governance reduce divergence. The result is reliable, auditable integration across disparate data sources that preserves context and leaves room for schemas to evolve.
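A schema contract and an incremental reconciliation step could look roughly like the following Python sketch. The `CONTRACT` fields, the `contract_violations` and `reconcile` helpers, and the sample records are assumptions made for illustration; a production contract would usually cover formats, ranges, and nullability as well as types.

```python
# Hypothetical contract: field name -> expected Python type.
CONTRACT = {"customer_id": int, "email": str, "signup_date": str}


def contract_violations(record: dict, contract: dict) -> list[str]:
    """Return readable violations; an empty list means the record conforms."""
    problems = []
    for name, expected in contract.items():
        if name not in record:
            problems.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            actual = type(record[name]).__name__
            problems.append(f"{name}: expected {expected.__name__}, got {actual}")
    return problems


def reconcile(master: dict, batch: list[dict], contract: dict, key: str) -> list:
    """Merge one batch into master, keyed by `key`; return rejected records."""
    rejected = []
    for record in batch:
        problems = contract_violations(record, contract)
        if problems:
            # Keep the reasons next to the record so the rejection is auditable.
            rejected.append((record, problems))
        else:
            master[record[key]] = record
    return rejected


master: dict = {}
batch = [
    {"customer_id": 1, "email": "a@example.com", "signup_date": "2024-01-05"},
    {"customer_id": "2", "email": "b@example.com"},  # wrong type, missing field
]
rejected = reconcile(master, batch, CONTRACT, key="customer_id")
```

Merging batch by batch keeps each reconciliation step small, and the rejected list makes schema drift visible instead of letting it surface later as a brittle join.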

Techniques and Best Practices for Scalable, Flexible Validation Across Formats

Techniques for scalable, flexible validation across formats emphasize modularity and adaptability, enabling heterogeneous data to be verified without rigid, one-size-fits-all schemas. The approach prioritizes interoperable validators, incremental integration, and clear data provenance, ensuring traceable lineage across pipelines.

With data integration as the goal, this approach promotes repeatable checks, auditable rules, and documented assumptions. Disciplined, reproducible validation workflows give teams the flexibility to work across diverse data sources and formats.
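As a rough sketch of format-independent validation, the Python example below parses CSV and JSON input into a shared record shape and applies one rule to both. The field names `id` and `score`, the adapter functions, and the sample data are hypothetical; only the standard-library `csv`, `io`, and `json` modules are used.

```python
import csv
import io
import json


def from_csv(text: str) -> list[dict]:
    """Parse CSV text into records; every value arrives as a string."""
    return list(csv.DictReader(io.StringIO(text)))


def from_json(text: str) -> list[dict]:
    """Parse a JSON array of objects into records."""
    return json.loads(text)


def normalize(record: dict) -> dict:
    """Coerce fields to shared types so one rule set fits every format."""
    return {"id": int(record["id"]), "score": float(record["score"])}


def score_in_range(record: dict) -> bool:
    """Format-agnostic rule: scores must lie in [0, 1]."""
    return 0.0 <= record["score"] <= 1.0


csv_text = "id,score\n1,0.8\n2,1.4\n"
json_text = '[{"id": 3, "score": 0.25}]'

records = [normalize(r) for r in from_csv(csv_text) + from_json(json_text)]
failures = [r["id"] for r in records if not score_in_range(r)]
```

Supporting another format then means writing one more adapter, while the rules themselves stay unchanged.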

Frequently Asked Questions

How Do Privacy Concerns Affect Mixed Data Verification?

Privacy requirements constrain mixed data verification by mandating minimal data collection, rigorous governance, and transparent processes. Consent must be explicit, revocable, and documented, and verification methods must balance accuracy against user autonomy and freedom of choice.

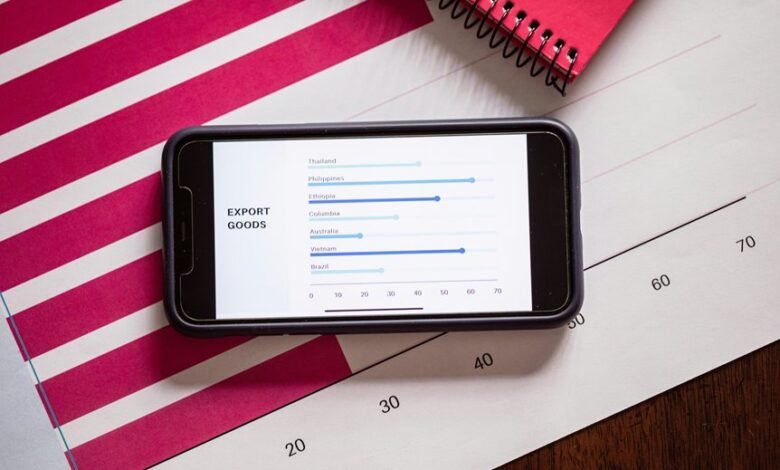

What Metrics Best Assess Cross-Format Data Reliability?

Accuracy, precision, and reproducibility are the most useful measures of cross-format data reliability. They work best alongside data governance and data lineage practices, with systematic audits, versioning, and anomaly detection supporting disciplined data integrity.
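One simple, concrete proxy for cross-format reliability is a field-level agreement rate between two representations of the same entities. The Python sketch below is one possible formulation, not a standard metric definition; the `field_agreement` function and the sample records are assumptions for illustration.

```python
def field_agreement(a: dict[str, dict], b: dict[str, dict],
                    fields: list[str]) -> float:
    """Fraction of (key, field) pairs with equal values in both sources.

    Only keys present in both sources are compared. A field absent from
    both records counts as agreement, because both lookups return None.
    """
    shared = a.keys() & b.keys()
    total = len(shared) * len(fields)
    if total == 0:
        return 0.0
    matches = sum(a[k].get(f) == b[k].get(f) for k in shared for f in fields)
    return matches / total


# Hypothetical records for the same users, loaded from two formats.
from_csv_export = {
    "u1": {"name": "Ada", "age": 36},
    "u2": {"name": "Lin", "age": 41},
}
from_json_api = {
    "u1": {"name": "Ada", "age": 36},
    "u2": {"name": "Lin", "age": 42},
    "u3": {"name": "Sam", "age": 29},
}

rate = field_agreement(from_csv_export, from_json_api, ["name", "age"])
print(rate)  # 0.75: three of the four compared fields agree
```

Recomputing the rate on the same inputs should always give the same value, which makes it a convenient reproducibility check as well.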

Can Verification Scale to Real-Time Data Streams?

Verification can scale to real-time streams, provided privacy concerns are mitigated. Reliability metrics track missing values and timeliness as data arrives, and collaborative tools support practical verification workflows. Privacy safeguards and throughput trade-offs still require careful design.
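A streaming check that tracks missing values and timeliness might be sketched in Python as follows. The `StreamStats` and `verify_stream` names, the `ts` field, and the 30-second lag limit are assumptions; `now` is passed in explicitly to keep the example deterministic, whereas a live pipeline would read the clock.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class StreamStats:
    seen: int = 0
    missing: int = 0  # events with no timestamp or a None value in any field
    late: int = 0     # events older than the allowed lag


def verify_stream(events: Iterable[dict], now: float, max_lag_s: float,
                  stats: StreamStats) -> Iterator[dict]:
    """Yield only complete, timely events, updating stats as a side effect."""
    for event in events:
        stats.seen += 1
        if event.get("ts") is None or any(v is None for v in event.values()):
            stats.missing += 1
            continue
        if now - event["ts"] > max_lag_s:
            stats.late += 1
            continue
        yield event


events = [
    {"ts": 100.0, "value": 1.5},
    {"ts": 99.0, "value": None},  # missing value
    {"ts": 40.0, "value": 2.0},   # 61 seconds old, beyond the 30 s limit
]
stats = StreamStats()
accepted = list(verify_stream(events, now=101.0, max_lag_s=30.0, stats=stats))
```

Because the generator handles one event at a time, memory use stays constant, and the running counters can be exported as metrics without buffering the stream.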

How to Handle Missing or Corrupted Values Effectively?

Handling missing and corrupted values involves robust imputation, validation, and anomaly detection, with privacy concerns taking priority. Cross-format metrics guide consistency across sources, while real-time scalability relies on streaming pipelines and on collaborative tools that keep the process transparent and adaptable.
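As a minimal illustration of imputation combined with validation, the Python sketch below replaces missing or out-of-range entries with the median of the valid ones and records which entries were changed. The `clean_column` helper, the valid range, and the sample readings are hypothetical, and median imputation is only one of several reasonable strategies.

```python
import math
from statistics import median


def clean_column(values: list, low: float, high: float):
    """Replace missing or corrupted entries with the median of valid ones.

    Returns (cleaned, flags), where flags[i] is True if entry i was imputed.
    """
    def is_valid(v) -> bool:
        return (isinstance(v, (int, float)) and not isinstance(v, bool)
                and not math.isnan(v) and low <= v <= high)

    valid = [v for v in values if is_valid(v)]
    if not valid:
        raise ValueError("no valid values to impute from")
    fill = median(valid)
    flags = [not is_valid(v) for v in values]
    cleaned = [fill if flagged else v for v, flagged in zip(values, flags)]
    return cleaned, flags


# None and "n/a" are missing; -999.0 is a sentinel outside the valid range.
readings = [21.5, None, 22.0, "n/a", -999.0, 23.5]
cleaned, flags = clean_column(readings, low=-50.0, high=60.0)
print(cleaned)  # [21.5, 22.0, 22.0, 22.0, 22.0, 23.5]
```

Returning the flags alongside the cleaned values keeps imputation auditable, since downstream consumers can tell observed values from filled ones.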

Which Tools Support Collaborative Data Validation Workflows?

“Many hands make light work.” Tools for collaborative data validation should support data governance and data provenance, so that flexible teams can work methodically and keep collaboration transparent and controlled across sites and datasets.

Conclusion

Mixed data verification thrives on modular, provenance-aware workflows and standardized metadata registries. By aligning content, structure, and semantics through explicit contracts and incremental reconciliation, it builds auditable, repeatable checks across diverse sources, with each validation step clearly traceable. In short, disciplined governance and reproducible methods expose hidden inconsistencies and support reliable analytics across heterogeneous data landscapes.